The Silver Challenge - Lecture 10

Woohoo! I have completed the Silver Challenge! Today we wrapped up the course with a review of different RL algorithms used to play classic games like backgammon, Scrabble, chess and Go [1]. I’ll briefly explain some of the intuition behind the TreeStrap algorithm, present the state of the art (at least as of 2015), and then close by highlighting some of the techniques Silver used in his teaching that I thought were really effective [1].

The TreeStrap Algorithm

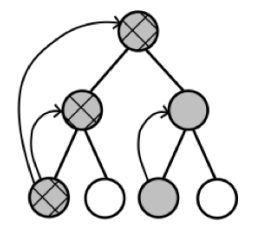

The TreeStrap algorithm combines search and learning in a way that extracts a lot of information from each search [1]. TreeStrap uses a search process to compute minimax values at every node of a search tree [1]. These minimax values are then used to update the value function at all nodes in the tree, not just at the root node [1]. This idea is presented in Figure 1, where we can see that the minimax values are backed up through the tree towards the root [1]. TreeStrap differs from TD Root and TD Leaf, which each update the value of only a single node per search (the root for TD Root, and the leaf of the principal variation for TD Leaf), because it updates every node in the tree towards its own minimax search value [1].

Figure 1 - Source: [2]
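To make the update concrete for myself, here is a minimal Python sketch of a TreeStrap-style update with a linear value function. Everything game-specific is my own assumption rather than something from the lecture: the toy Nim game (take 1 to 3 stones, whoever takes the last stone wins), the `features` encoding, the search depth, the step size, and the shortcut of sampling random positions instead of positions from self-play games. The only point is to show that every interior node of the search tree, not just the root, is moved towards its own minimax value.

```python
import random

def features(stones, max_to_move):
    """Hypothetical features: one-hot on stones mod 4, signed by the player to move."""
    phi = [0.0] * 4
    phi[stones % 4] = 1.0 if max_to_move else -1.0
    return phi

def value(w, phi):
    """Linear value function v(s; w) = w . phi(s), from the max player's perspective."""
    return sum(wi * xi for wi, xi in zip(w, phi))

def search(stones, max_to_move, depth, w, visited):
    """Depth-limited minimax that records (features, search value) for every interior node."""
    if stones == 0:
        # The previous player took the last stone, so the player to move has lost.
        return -1.0 if max_to_move else 1.0
    if depth == 0:
        # Leaf of the search tree: fall back on the current value function.
        return value(w, features(stones, max_to_move))
    children = [search(stones - k, not max_to_move, depth - 1, w, visited)
                for k in (1, 2, 3) if k <= stones]
    backed_up = max(children) if max_to_move else min(children)
    visited.append((features(stones, max_to_move), backed_up))
    return backed_up

def treestrap_update(w, stones, max_to_move, depth=3, alpha=0.05):
    """One TreeStrap-style step: move v(s; w) towards the search value at all interior nodes."""
    visited = []
    search(stones, max_to_move, depth, w, visited)
    new_w = list(w)
    for phi, target in visited:
        error = target - value(w, phi)  # error measured with the pre-update weights
        for i, xi in enumerate(phi):
            new_w[i] += alpha * error * xi
    return new_w

def train(num_updates=3000, seed=0):
    """Assumption: random start positions stand in for positions from self-play."""
    rng = random.Random(seed)
    w = [0.0] * 4
    for _ in range(num_updates):
        w = treestrap_update(w, rng.randint(1, 12), rng.random() < 0.5)
    return w

if __name__ == "__main__":
    print([round(x, 2) for x in train()])
```

In this toy game, positions where the number of stones is a multiple of 4 are losing for the player to move, so I would expect the first weight to be driven towards -1 and the other three towards +1.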

Silver explains that, even though he believed that the TreeStrap algorithm was a very effective method of playing games using a linear value function, he actually found with his work on AlphaGo that neural networks could also be extremely effective [1]. He uses this experience as an example that we as researchers should constantly be challenging our assumptions and testing them further [1].

The State of the Art (in 2015)

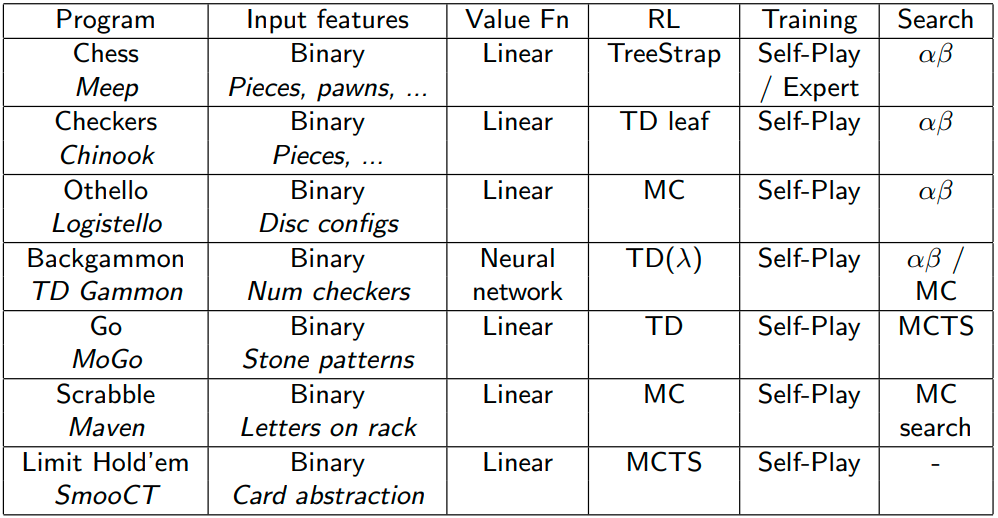

This lecture closed with a slide showing the state-of-the-art algorithms for playing classic games (not Atari or StarCraft) as of 2015. I think more neural networks have been applied since then (most notably with AlphaGo), but it was still interesting to see how well RL could perform in some classic games without using neural networks as function approximators [1]. In fact, Silver argues in this lecture that, at least in 2015, neural networks were not the best function approximator for some of the more deterministic games in this repertoire [1].

Figure 2 - Source: [2]

Teaching Strategies

Finally, I just want to close by listing a couple of Silver’s teaching strategies that I found quite effective and would like to use myself in the future:

- Tell a Story - Silver always added narration that linked the ideas in each lecture together. He told us the outline of the story at the beginning of each lecture, often placing the current lecture in the context of others. Then at each transition in the lecture, he would link ideas together, which helped his audience to track the flow of the concepts.

- Use Repetition - Silver was a master of structuring ideas so that we could see repeated forms in the structure and link different concepts together. For example, when he taught Monte Carlo and TD planning methods, he set them both up as different forms of computing the update for the policy function parameters, which helped us to see how these methods were the same and different. The repetitive structure really helped me to remember ideas from previous lectures and to understand the mechanism of how different methods worked.

- Explain the Mechanisms Behind the Math - Silver would always narrate the way an equation worked. That is, he would highlight in words what different parts of any equation were doing - sometimes they were pulling the update in a certain direction, sometimes they were measuring the similarities of two distributions or comparing the weights of two properties. But Silver always gave his students an intuitive way of understanding how the equations worked, which I think is part of the secret sauce of being a great researcher.

- Use Clear Language and Examples - Silver always had 1-3 great examples to make his points concrete in every lecture, and they made all the difference. He was good at using only the minimum necessary technical vocabulary to convey his points, and then he focused on giving students an intuitive, conceptual understanding through concrete examples.

- Repeat Students’ Questions - Silver was excellent at listening to his students’ questions and very quickly finding ways to respond to them. One thing in particular that he did was to repeat the question to the class - I love it when professors do this because it helps bring everyone into the discussion, and prevents us from zoning out or losing the thread because we couldn’t hear the original question.

- Don’t Add Superfluous Information - Silver’s slides were very clean, and every piece of information on them was explained and important to the discussion. I really appreciated this because extra information can make the core concepts unnecessarily hard to understand.

References:

[1] Silver, D. “RL Course by David Silver - Lecture 10: Classic Games.” YouTube. 29 Jul 2015. https://www.youtube.com/watch?v=kZ_AUmFcZtk&list=PLqYmG7hTraZDM-OYHWgPebj2MfCFzFObQ&index=10 Visited 15 Aug 2020.

[2] Silver, D. “Lecture 10: Classic Games.” https://www.davidsilver.uk/wp-content/uploads/2020/03/games.pdf Visited 15 Aug 2020.